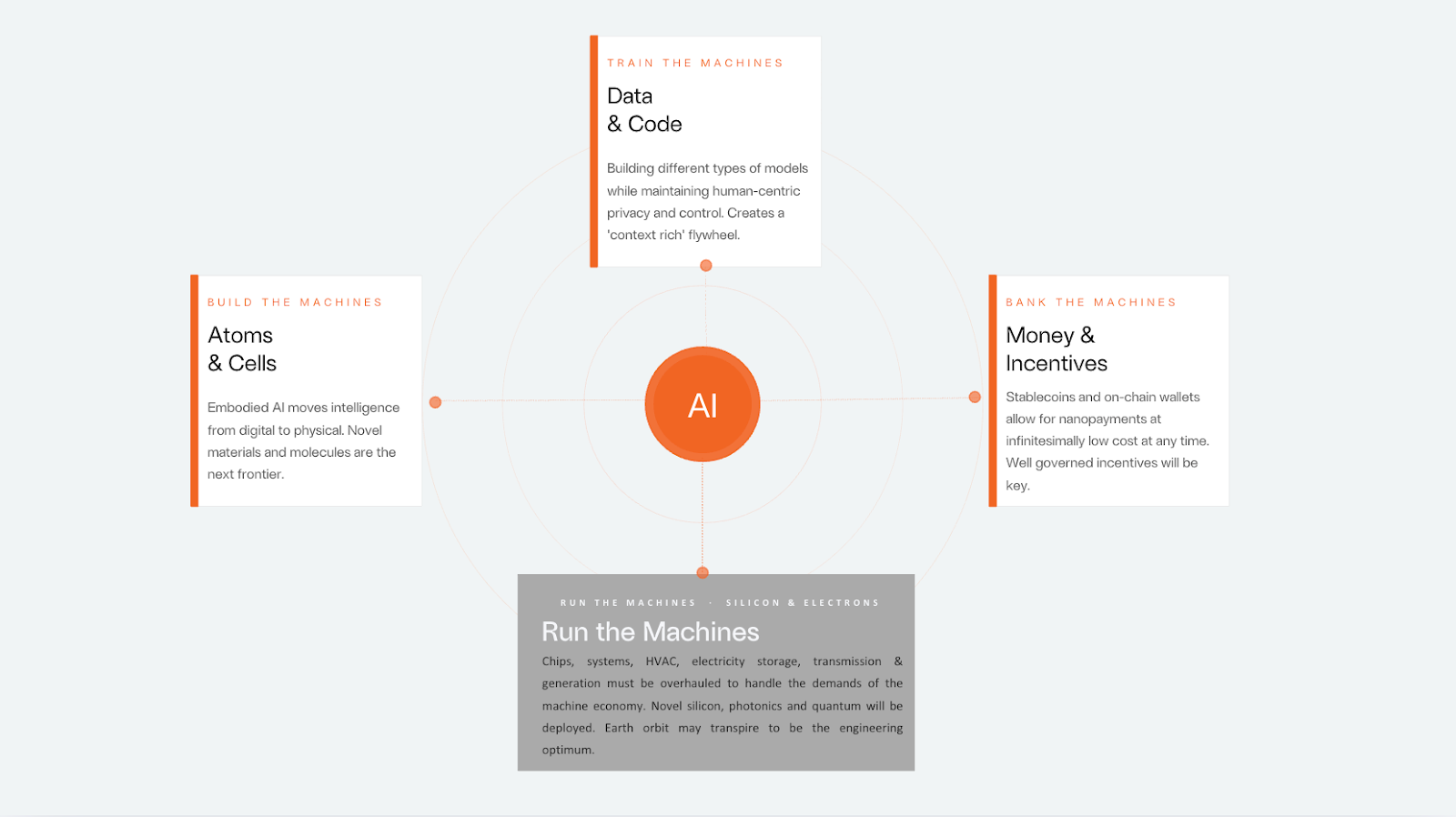

How to build, train & bank the machines

Abstract

The world is transitioning from an economy bounded by human toil to a Machine Economy in which economic output scales autonomously through AI agents. Three technological inventions are converging to make this possible: Specialised Accelerated Compute (the hardware for intelligence), Large Language Models (reasoning and autonomous action), and Programmable Blockchain Networks (financial coordination at machine speed). The defining product of this new environment is not an application; it is an ephemeral intervention calibrated to a specific moment. Call it 'product:moment' fit.

Today's financial infrastructure wasn't built for this. Settlement cycles, custody rules and payment rails were all designed around human timescales. In a machine-speed economy, value will accrue not to labour or capital, but to deployed agency: the capacity to act autonomously within a self-improving system.

The machine economy is what emerges when data informs, silicon thinks, models reason, money moves, and atoms enact. Fabric Ventures foresees significant venture opportunities in the applications and particularly the coordinating infrastructure that this shift requires.

No ‘app for that’, or even ‘agent for that’.

Our economy underpins both human progress and peril. We are now entering a new phase: the Machine Economy, in which autonomous AI agents, not human workers, become the primary actors, acquiring resources, transacting, and compounding value without human involvement at each step. Economic output is no longer bounded by human toil.

This will reshape society over the coming decades. Fabric backs the architects of this transition: the builders of digital infrastructure and the applications that run on it, in a world that is machine-driven yet human-centric. This is an evolution of our longstanding focus on the open economy, an economy that protects individual sovereignty over identity, money and data, and keeps opportunity open to all. AI was always the prime application for this vision. We considered calling it the ‘AI economy’ or the ‘agentic[1] economy’, but settled on ‘machine’. Words matter: the Greek mēkhanē, from which we inherit 'machine', carries the sense of a ‘contrivance that overcomes constraint’, a sense the Romans carried into the Latin ‘machina’. Ancient theatre literally employed a crane that allowed a ‘god’ to descend to the stage and intervene to resolve the intractable plot of a play, and the device became shorthand for any divine problem solver. Thus Aphrodite rescued Paris from Menelaus mid-battle, the Great Eagles rescued the Hobbits from Mount Doom and (unintentionally) bacteria rescued humanity from the Martians in ‘War of the Worlds’. This is the ‘deus ex machina’. The crane has been rebuilt in silicon, at civilisational scale, and the gods now descending are autonomous agents.

The defining output of this machine economy is not an application. It is an intervention: a hyper-contextualised, intimate experience assembled on the fly, arriving precisely when needed and dissolved once its purpose is served. Responding to continuous personal context, a ‘melody’ of alerts, tones, buzzes, voice and images is composed in real time by autonomous agents, purpose-built for a single moment. Open-source components will be reused; any code required for their function or integration will be written and executed only once. The underlying application may exist for forty minutes or forty seconds, and never recur. It is not delivered by a single platform, protocol, or product in any sense we currently understand. In fact, it will be best delivered, that is, safely and in alignment with human interests at every scale, through the collaboration of many such systems built on open standards and technologies.

This is a fundamental departure from how technology has been built and delivered for the past five decades. Today’s applications arise from months or years of experimentation in search of product:market fit; they are designed once and distributed to millions. They are static architectures wrapped around general assumptions about users, configured marginally at the edges. In this world of ephemeral experiences, there is neither need nor space for planning, for passwords, for SaaS, for app stores, or even for mobile devices as we know them. All devices will collaborate continuously to deliver what is needed with the minimum possible conscious human involvement. This dislocation will reverberate back up the innovation cycle as the industry adjusts from today’s assiduous hunt for product:market fit to a new role overseeing AI agents as they swarm around a new goal: ‘product:moment’ fit.

The long-sought ambition of reaching a "market of one" has been leapfrogged. We now deliver to a market of one moment, with needs assessed and addressed in real time. This flattens the product management process as we know it, and the supply chains behind it, with very significant consequences, not only for software but also for goods, products, and services. When applications exist for minutes rather than years, economic coordination cannot rely on contracts negotiated between firms. It must be automated, programmable, and machine-native.

The oft-cited Jevons paradox offers a necessary corrective to the most common anxiety about this transition. When coal became cheaper and more abundant in nineteenth-century Britain, consumption did not fall; it expanded manifold, as cheap energy made economically viable an entire universe of applications that had previously been impossible. Intelligence abundance will do the same, with greater force: compressing the cost of execution while making the questions of what to pursue, why, and how more valuable than ever. Ubiquitous machine intelligence will not reduce the demand for human judgment; it will dramatically expand the analytical surface area that requires it. In a world where any sufficiently specified task can be delegated to an agent, the scarce and therefore precious resource is no longer capability; it is direction.

In the machine economy, smart systems operate in real time. A digital helper uses live information, acquires computing power, figures out what to do, writes new code if needed, calls online services, makes deals, and works toward an outcome: not just sending a notification, but taking real action. Each intervention fits its exact moment in a way no pre-made product can.

The efficiency gains compound upstream. More than 80% of current codebases[2] exist to handle requirements beyond the immediate need[3]. Up to 45%[4] of code is devoted to integrations with other software — something machine parties can now negotiate directly. A further 30–50% of SaaS costs[5] go to security auditing and bug-fixing. With purpose-built, self-constructed code, vulnerabilities and technical debt become largely historical concerns: the security arms race will unfold in real time, continuously, rather than in periodic audits.

Consider what this means for product roadmaps, sprints, A/B testing, and the entire product management stack built over months or years – all compressed into a forty-minute application used at 100% efficiency because it was built for one user, for one use.

In a world where applications exist for forty minutes and never recur, the mechanisms through which trust is established, quality assessed, and disputes resolved must themselves be machine-native. Discovery cannot depend on interpreting a search result or an app store rating; it must rest on reputation encoded onchain, derived from verifiable prior performance across relevant counterparties. Quality scores become real-time attestations rather than retrospective reviews. Dispute resolution, rather than requiring courts or arbitration panels, becomes a programmable process in which evidence is submitted, adjudicated, and settled within the same session. ERC-8004 and its successors point toward this architecture: a reputation layer allowing agents to carry verified track records into every new counterparty relationship, as naturally as a professional updates a CV, a LinkedIn profile or a GitHub account.
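To make "reputation derived from verifiable prior performance" concrete, here is a minimal Python sketch of how an agent might score a counterparty from onchain attestations. It is not the ERC-8004 interface: the `Attestation` record, the 0-to-1 score scale, the one-vote-per-counterparty rule and the 30-day half-life are all hypothetical choices made for illustration.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Attestation:
    """A counterparty's rating of one completed job (hypothetical schema)."""
    counterparty: str  # identifier of the agent that issued the rating
    score: float       # assumed normalised: 0.0 = failed, 1.0 = flawless
    age_days: float    # days since the job settled


def reputation(attestations: list[Attestation], half_life_days: float = 30.0) -> float:
    """Recency-weighted mean score, using only each counterparty's most recent attestation.

    Returns 0.0 for an agent with no history: no track record, no reputation.
    """
    # Keep one attestation per counterparty (the newest) so no single partner dominates.
    latest: dict[str, Attestation] = {}
    for a in attestations:
        prev = latest.get(a.counterparty)
        if prev is None or a.age_days < prev.age_days:
            latest[a.counterparty] = a
    if not latest:
        return 0.0

    # Exponential decay: an attestation half_life_days old counts half as much as a fresh one.
    decay_rate = math.log(2) / half_life_days
    kept = list(latest.values())
    weights = [math.exp(-decay_rate * a.age_days) for a in kept]
    weighted_sum = sum(w * a.score for w, a in zip(weights, kept))
    return weighted_sum / sum(weights)


# Usage: a fresh perfect job and a month-old failure.
history = [Attestation("agent-a", 1.0, 0.0), Attestation("agent-b", 0.0, 30.0)]
print(round(reputation(history), 3))  # 0.667
```

Counting only each counterparty's latest attestation limits how far one partner can inflate a score, and the decay lets recent performance outweigh old history; a production design would also need to weigh who is attesting, which this sketch leaves out.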

Unpacking Some Examples

Consider what these experiences might look like across sectors.

A vehicle navigating flash flooding reroutes using live satellite and sensor data, negotiates priority access to a private toll road via a smart contract, bundles its routing telemetry with two hundred other vehicles to sell as a real-time flood map to the local emergency authority, and settles all payments, data licences, and routing fees autonomously before the driver reaches their destination. No human designed that application. No app store carries it. It existed for forty minutes and dissolved.

In retail finance, an agent detects a micro-arbitrage opportunity across decentralised exchanges and assembles a transaction strategy that requires a certain trading volume and a burst of compute to secure. The agent aggregates this volume without leaking the specifics of the opportunity, borrows to pay for the compute, and then executes the entire transaction workflow across three liquidity pools. In the background it hedges the position and finally closes it, all within a single block confirmation.
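The phrase "all within a single block confirmation" rests on atomicity: a bundle of steps either executes completely or not at all. The Python sketch below illustrates that all-or-nothing property on a toy balance sheet. The `swap` helper, asset names and prices are hypothetical; on a real chain the virtual machine reverts the whole transaction itself, rather than application code copying state as done here.

```python
from copy import deepcopy
from typing import Callable

Balances = dict[str, float]
Step = Callable[[Balances], None]


class StepFailed(Exception):
    """Raised by a step that cannot complete; aborts the whole bundle."""


def swap(asset_out: str, amount_out: float, asset_in: str, amount_in: float) -> Step:
    """Hypothetical swap leg: pay amount_out of one asset, receive amount_in of another."""
    def step(balances: Balances) -> None:
        if balances.get(asset_out, 0.0) < amount_out:
            raise StepFailed(f"insufficient {asset_out}")
        balances[asset_out] -= amount_out
        balances[asset_in] = balances.get(asset_in, 0.0) + amount_in
    return step


def execute_atomically(balances: Balances, steps: list[Step]) -> Balances:
    """Apply every step to a scratch copy; return the new state only if all succeed.

    On any failure the original balances are returned unchanged, mimicking a reverted transaction.
    """
    scratch = deepcopy(balances)
    try:
        for step in steps:
            step(scratch)
    except StepFailed:
        return balances
    return scratch


# Usage: a profitable round trip commits; a bundle whose second leg fails changes nothing.
start = {"USDC": 100.0}
print(execute_atomically(start, [swap("USDC", 100.0, "ETH", 0.05), swap("ETH", 0.05, "USDC", 101.0)]))
print(execute_atomically(start, [swap("USDC", 100.0, "ETH", 0.05), swap("ETH", 0.06, "USDC", 121.0)]))
```

Because a failed leg leaves the agent's balances untouched, an agent can borrow, trade and repay inside one transaction without being left half-exposed.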

In home productivity, a domestic robot detects a plumbing leak, identifies and learns the language of the home’s pumps, thermostats and control systems and builds it natively into its code base, cross-references repair manuals and the home’s architectural plans, orders a replacement part and negotiates same-day delivery pricing. It then walks the homeowner through the repair with augmented reality guidance (at a convenient time it prebooks in their calendar) and updates the home appliances’ ‘manual’ with a resolution that will never be needed again.

In medicine, a collectively-owned AI system analyses a patient’s genomic profile, identifies a novel therapeutic target that no existing drug can address, designs a prototype molecule, and auctions the insight to a pharmaceutical company. The patient's genetic data remains under their sole control, with consent and any compensation flowing back to them enforced onchain. The winning bidder refines the molecule into viable candidates and simulates binding behaviour against the target protein, advancing a treatment pathway from insight to pipeline in a single automated sequence.

These are not speculative futures. Each capability exists in early form today. An integrated infrastructure is developing such that these applications can now emerge, run and settle autonomously at the speed, scale, and safety required.

Increasingly efficient markets are created everywhere. To use the classic example of ride hailing, your personal agents have complete context for your next journey (location, time, attendees, traffic, other tasks en route) and can negotiate with Gett, Jump, Wheelie, Uber and the Tube to secure a car and cancel the losing bids. At what point do drivers no longer need the user-aggregation services, and simply allow their ‘booking agent’ to negotiate directly with a rider's ‘productivity agent’? That new architecture is the basis for the machine economy, and it is made possible by three converging inventions.

From the Information Economy to the Machine Economy

We are entering the fourth great era of progress. First, success in the agricultural economy was driven by land ownership, i.e. what you own and your output per acre. Second, success in the industrial economy was dominated by the automation of labour, i.e. what you do and your production per person. Third, and today, we are primarily living through the information economy, where power resides in harnessing data, i.e. what you know and insight per bit. Each new form of human economy has been catalysed by a single great invention or a series of them.

The last great step change in the information age was the Internet. This was fundamentally one invention: networked computing. It then took just thirty years to reshape every industry on earth.

What is different today is that three independent inventions of at least that magnitude are maturing at the same time, one in computing, one in AI, and one in money.

Specialised Accelerated Compute. Central Processing Units (CPUs) reigned for 30 or more years. GPUs were built to render pixels in video games. It turned out that these massively parallel processors were excellent Bitcoin miners, and they are now the essential factories of artificial cognition. NVIDIA’s market capitalisation went from around $300 billion to $4.5 trillion in three years (the ‘great flip’) because the world realised we had fortuitously built the silicon substrate for machine intelligence. This is the raw capacity to train, think, reason, measure and react at scale.

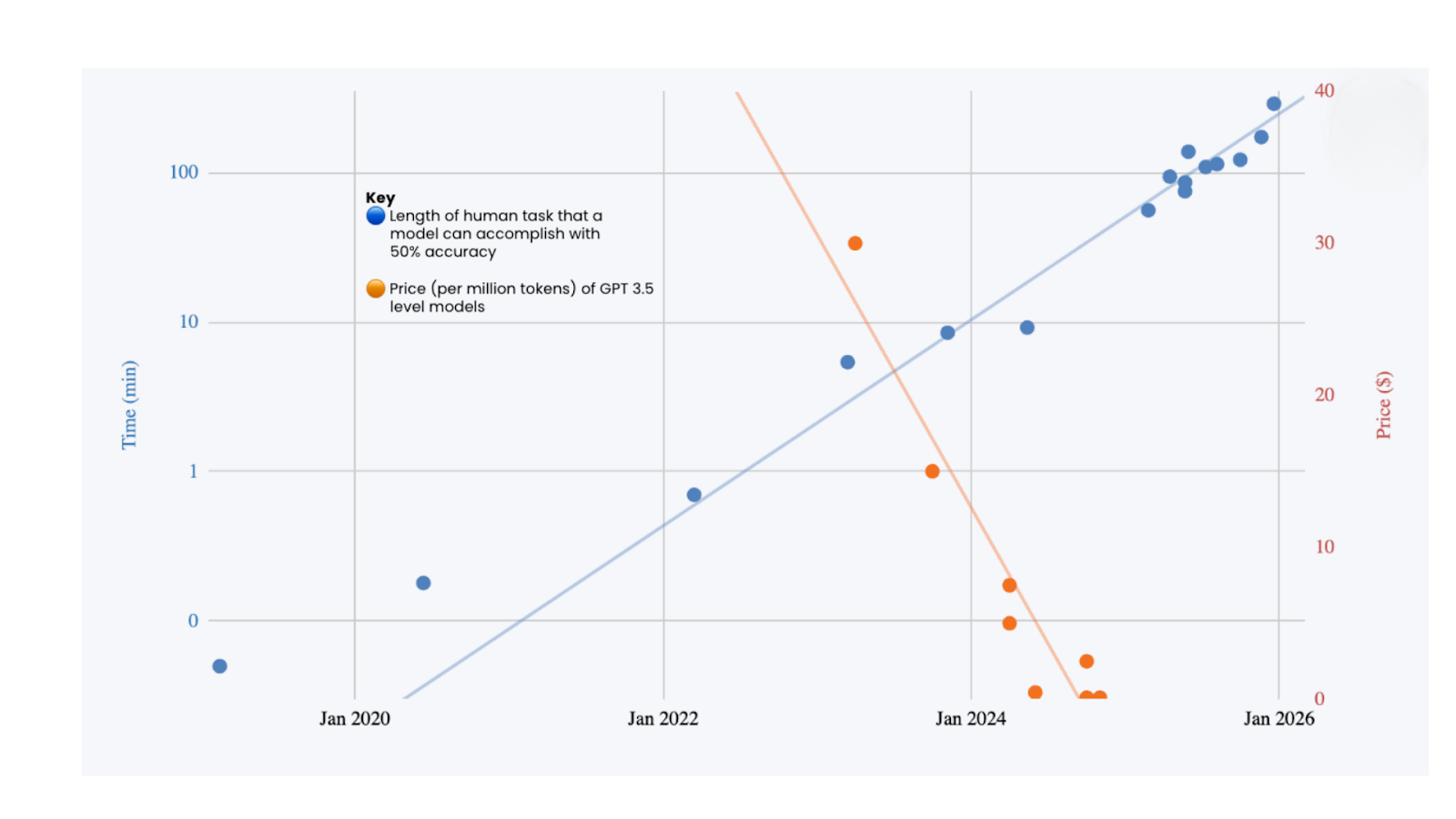

Large Language Models. We discovered that if you feed enough data through enough parameters, general-purpose reasoning emerges. This was not engineered top-down; it was discovered. GPT-2 couldn't finish a sentence. GPT-4 passed the bar. That took four years. Today, the frontier has moved somewhere stranger still. The hard ceiling of human intelligence, the Einstein, Hawking, Copernicus ceiling, is an IQ of around 160. Current frontier models have broadly surpassed it. Claude Opus 4.5 delivers consistent performance through 30-minute autonomous coding sessions, achieving 80.9% on SWE-bench Verified and resolving four in five real production bugs without human involvement (and without sleep). Google DeepMind's Gemini Deep Think achieved gold-medal standard at IMO 2025, solving five of six problems in algebra, combinatorics, geometry, and number theory within the competition time limit while working entirely in natural language. GPT-5.2 outperforms industry professionals across 44 occupations at over 11x the speed and under 1% of the cost. Grok 4 Heavy is the first model to score 50% on Humanity's Last Exam, a benchmark designed to be the final closed-ended academic benchmark of its kind. All these benchmark achievements were viewed as implausible in 2020. These foundation models are a new kind of artefact: trained intelligence that can be copied, composed, and deployed anywhere, with inference-time marginal costs plummeting towards zero. And this is before hard-coded, specialised inference silicon arrives. Agents and physical embodiment add action to this insight, closing the loop for further self-refinement of their capabilities.

Programmable Blockchain Networks. Money is humanity's coordination and time-shifting technology, built for humans, not machines. Do you really think the machines will use our slow, archaic banking system to transact with each other? No: they’ll use blockchains. The most powerful innovations in history have emerged from open, permissionless systems: the internet, open-source software, and now programmable blockchain networks of value that anyone can build on, compose with, and extend without seeking permission. Stablecoins alone settled $27.6 trillion on-chain in 2024, rivalling Visa and Mastercard combined, and regulatory clarity will accelerate that trajectory further. Blockchains are the systems that allow software to safely make and keep financial promises without intermediaries: not simply payments, but irrefutable ownership, contractual commitments, and complex coordination of activities and resources, all enshrined in code. Through onchain identity, reputation, and ultimately programmatic credit and liability (see emerging standards like ERC-8004) we can achieve levels of trust that will deliver social legitimacy. But the deeper transformation is structural. When the fixed costs of market-making collapse, markets appear wherever there is demand: revenue-sharing between creators and audiences, parametric insurance triggering on verifiable data, fractionalised ownership of physical infrastructure. The long tail of economic activity (too small, too complex, or too geographically dispersed to justify traditional financial plumbing) becomes organisable for the first time. Composability compounds this further: trust and reputation earned in one context become portable capital in another, just as TCP/IP made every application built on it interoperable by default.
Autonomous agents cannot open bank accounts or extend credit through personal relationships; they need financial infrastructure that is natively machine-readable, deterministic, and auditable with no human in the loop. Nation-state sovereign AI also requires sovereign financial settlement: the ability to pay for compute, data, and services without routing through foreign intermediaries, which is precisely why governments from Singapore to the UAE to the United States now treat digital asset infrastructure as a strategic priority rather than a regulatory problem to be managed. The rails being built now are laying the foundation for an economy structurally larger than the one we have. Forget ideology, or even ‘just’ the reinvention of finance: programmable value networks are pivotal horizontal infrastructure, making public markets possible wherever there is demand and, crucially, legitimate, because when anyone can audit the rules and verify the execution, the integrity of the system becomes intrinsic rather than delegated.

Any two of these inventions without the third, and you hit a ceiling. Compute and models without programmable value networks produce brilliant intelligence with no way for autonomous agents to transact, own, or coordinate economically. Think GPT behind an API paywall, with economic transactions bottlenecked through Stripe and human-readable code: it remains a philosopher in a box without the power to transact. Models and blockchains without compute simply cannot exist: they need data centres to train the models, low-latency compute to run inference, and the tamper-resistant global consensus that underlies machine-level payment rails. Compute and blockchains without models deliver raw power and inert settlement infrastructure, with no intelligence to direct or allocate either.

The convergence of these inventions will bring us into the ‘machine economy’. As we move beyond the information economy, the machine economy marks the rise of capability, i.e. what can be done autonomously. Value accrues not to human labour hours or physical capital, but to deployed agency: to autonomous systems.

Transformation Across Three Critical Arenas: Build, Train, Bank the Machines

These intersecting exponential innovations are the drivers of this new age of progress and demand retooling across three critical arenas. The Machine Economy will not be owned by a single platform, or even by several. Its greatest strength lies in its openness and interoperability. It will be composed of thousands of specialised protocols and agent-native infrastructures. Machines do not need UIs or even APIs; they can communicate and coordinate resources at the protocol layer and re-architect themselves at the binary level as they go. The DePIN networks, AI compute markets, agent identity systems, programmable credit networks, onchain reputation and data markets seen to date are but an inkling of what is to come.

We believe that each of these arenas offers opportunities for young companies and projects that can blossom into truly enduring endeavours. Identifying these early is the role of venture capital. Fabric Ventures exists to meet them and accelerate their success.

Build the Machines

Silicon & electrons are the substrate on which intelligence runs. GPUs, integrated accelerated compute systems, advanced data centre cooling, and digital power systems are the factories for cognition. At any point in time, one or more of these physical resources is driven into scarce supply by the sheer level of demand. Whoever controls compute capacity controls the rate at which the machine economy can expand in both scale and scope. We have seen that even a humble chat interface to software-instantiated intelligence, sold behind a subscription paywall, can be a runaway success. Deeper integration into coding, productivity workflows and business processes is generating yet further value.

Atoms & cells are the interface with physical reality. This extends far beyond robotics (assembled under human direction) into any domain where machine intelligence directs the arrangement of matter. It spans from industrial robots assembling vehicles to AI systems designing novel crystal structures for next-generation batteries, to generative models inventing drug molecules that have never existed in nature.

In January 2025, Microsoft's MatterGen became the first AI to design novel materials to specification rather than screening known ones. A new compound it designed, TaCr₂O₆, was then synthesised in the lab, with measured properties close to the design target. In a couple of decades, we’ll likely have room-temperature superconductors, at which point many of today’s compute constraints will no longer apply.

In late 2025, A-Lab AutoBot in Berkeley showcased a self-driving lab that now designs, synthesises, and tests new materials autonomously, achieving a 71% success rate with no human input. Current focus: battery chemistry. Coming in 2 to 3 years, an AI-designed battery that dissolves the primary hardware constraint on mobile robots.

In medicine, DeepMind's AlphaFold 3 (May 2024) extended structure prediction from proteins to the full molecular interactome: DNA, RNA, and small molecules. In October 2024, AlphaFold's creators, Demis Hassabis and John Jumper, shared the Nobel Prize in Chemistry. The parts list became the instruction manual. The first AF3-designed drug will be through Phase II trials within 3 to 5 years. Beyond that, whole-cell modelling will give longevity experiments a solid scientific foundation.

Google DeepMind’s GNoME system predicted 2.2 million new crystal structures, of which 380,000 are expected to be stable, equivalent to roughly 800 years of human materials discovery. Insilico Medicine’s rentosertib became the first drug in which both the biological target and the therapeutic compound were discovered using generative AI, with Phase IIa clinical trial results reported in 2025.

The atom & cell layer is where the machine economy escapes the screen and enters the world. It is arguably an even harder layer to scale than the power of data centres, because it is stubbornly subject to Newtonian (or Quantum) physics, not Moore’s Law. Yet, it is happening.

Train the Machines

Models & agents are the new labour force. Foundation models represent trained intelligence: algorithms plus weights that encode general-purpose reasoning. Unlike human labour, they scale at near-zero marginal cost once trained, but the training itself is capital-intensive and path-dependent. We do not believe that LLMs are the end of the road; rather, new domains, use cases, and outcomes will require different architectural approaches, such as world models, recursive language models, and reasoning and memory that persist at larger scale and over ever-longer time periods. On their own, models are simply tools to support human investigation. They become markedly more potent when expressed through autonomous economic agents capable of making decisions, negotiating agreements, and taking action on their own behalf in response to specific incentives.

Data & code are the oxygenated lifeblood and logic of this system. Data is the raw sensory input that feeds model training and real-time inference. Code is the executable logic that provisions GPU allocation, orchestrates across APIs, and provides encryption for privacy, security, and control over data sharing. Critically, whoever owns and controls the data pipelines and code repositories influences the behaviour and values of these systems and holds significant leverage to capture value.

Bank the Machines

Money & payments have always been the coordination signal: programmable value networks, or blockchains, are money that moves at the speed of machine decision-making, not human settlement cycles. Energy, expressed as value, can now travel around the world at the speed of light and negligible cost. Stablecoins, wallets, smart contracts, and token protocols form the nervous system through which machines coordinate economically. With these interfaces to economic reality, models can act in the financial world. They can secure resources, earn their own money, build their own credit record, be held accountable and use these to drive their own self-improvement.

The Self-Improving Flywheel

With all three inventions (to build, train and bank the machines) maturing concurrently, a self-improving loop emerges. Silicon trains models. Models become autonomous agents that generate demand for programmable money to transact and coordinate. Data trains better models. Better models drive demand for more compute. Capital flowing through programmable value networks funds the next cycle of GPU deployment. The triangle does not merely catalyse; it compounds over time.

On-chain and model activity generate data. The data and code generated by each transaction, each sensor reading, and each model inference flow back through the system, enriching the next generation of training, and new code can be reused in future software generation. Meanwhile, the physical systems deployed by intelligent agents, from DePIN infrastructure to autonomous laboratories synthesising novel materials, push the frontier of computing and of interaction with the physical world, and the cycle accelerates.

So, Why Must Today's Financial Systems Be Rebuilt?

Traditional financial rails, which were built for humans signing documents and banks reconciling ledgers overnight, cannot serve as the economic backbone of a system in which millions of tiny, fleeting, spontaneous transactions occur every minute. High-frequency trading (HFT) proved that machines can trade rapidly inside a closed market structure. It did not prove that a general machine economy can run on centralised rails.

An AI agent cannot open a bank account, wait three days for an ACH transfer to clear, or fax a signature, and it doesn’t need to. It can hold a wallet, sign a transaction, and settle a payment in the time it takes to complete a single inference. Moreover, these digital artefacts and capabilities are best harnessed via an API or RPC call rather than through a user interface operated by an easily distracted, error-prone human. Traditional financial instruments cannot denominate ownership of a forty-minute data licence or a fractional share of a dynamically composed AI inference pipeline. The machine economy is cross-venue, cross-asset, cross-border, and 24/7. Agents need to carry money, permissions, and rights across unknown counterparties and software environments without re-onboarding into a new intermediary every time[6].

Native digital assets can. These assets in the machine economy must be as fluid, composable, and granular as the system they coordinate.

Blockchains have generated 29 million (at last count) entirely novel borderless assets, or “tokens”, primed to bootstrap communities through speculative activity. This experimental, at times gambling, phase has led to innovations in lending, markets, and data infrastructure worldwide and, with stablecoins, has brought the world's dominant trading asset, the US dollar, onchain. Many more assets will now follow. Yet the most common objection to including blockchains within this framework is that they are hard to use, their front-end experience is poor, and human adoption has been slow. This overlooks the fact that 80% of DeFi transaction volume is non-human, and it fundamentally mischaracterises the real opportunity: machine adoption. Blockchains (and the technologies they have swept along with them) were always built for agents. And robots.

The machine economy operates at a transaction velocity many orders of magnitude beyond that for which the existing financial system was designed. The human economy will comprise less than a thousandth of the entire future economy, not merely by transaction volume but also by value. When autonomous agents are negotiating data licences, purchasing compute resources, settling micro-payments for sensor access, and coordinating logistics across hundreds of counterparties, all within seconds, the settlement infrastructure must operate at machine speed.

Programmable value networks are not merely faster pipes for moving existing money. They are a new kind of economic infrastructure that embeds ownership, commitment, and coordination directly into code. A smart contract is not merely a mechanism to support machine payments; it allows software to make and keep a wide range of promises, relating to identity, information sharing, or resource provisioning, all without human intervention. This is the defining characteristic of the Ethereum white paper, further refined in the construction of the NEAR blockchain for AI use cases: an internet where individuals and their aggregations own their identity, their value, and their data. What has changed since 2014 is that the “individuals” now include autonomous agents, and the “aggregations” include swarms of AI systems coordinating at machine speed.

But speed and programmability alone are not sufficient. The assets circulating within these value networks must be native to the digital environment, not tokenised representations of assets that ultimately settle in a legacy system. They must be digital-native tokens that reflect the value being created with the granularity and pace that the machine economy demands. When an ephemeral application assembles itself from six data sources, three compute providers, and a real-time sensor network, the value created and exchanged must be digitally reflected at the resolution of each micro-contribution. These new machine capital markets will serve agents with capital, credit, insurance and liquidity so that agents can borrow, put staked tokens to work, hedge risk, and secure and allocate compute. For this to work, agents must have identity and reputation, and must be accountable: open to liability and subject to dispute resolution.
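Reflecting value "at the resolution of each micro-contribution" is, at its simplest, a pro-rata split of a payment. The Python sketch below divides a payment held in integer base units (for example, a stablecoin's smallest unit) across contributors by weight, using the largest-remainder method so no unit is lost to rounding. The contributor names and weights are hypothetical, and how those weights would be measured is the genuinely hard part, which the sketch does not address.

```python
def split_payment(total_units: int, weights: dict[str, int]) -> dict[str, int]:
    """Split an integer payment pro rata by weight so the shares sum exactly to total_units.

    Uses the largest-remainder method: each contributor first gets the floor of its
    exact share, then leftover units go one each to the largest fractional remainders.
    """
    if total_units < 0:
        raise ValueError("total_units must be non-negative")
    if not weights or any(w < 0 for w in weights.values()):
        raise ValueError("weights must be non-empty and non-negative")
    weight_sum = sum(weights.values())
    if weight_sum == 0:
        raise ValueError("at least one weight must be positive")

    shares: dict[str, int] = {}
    remainders: list[tuple[int, str]] = []
    for name, weight in weights.items():
        # Exact integer arithmetic: floor share plus the remainder of the division.
        share, remainder = divmod(total_units * weight, weight_sum)
        shares[name] = share
        remainders.append((remainder, name))

    leftover = total_units - sum(shares.values())
    # Largest remainders first; ties broken by name so every party computes the same split.
    for _, name in sorted(remainders, key=lambda r: (-r[0], r[1]))[:leftover]:
        shares[name] += 1
    return shares


# Usage: six data sources, three compute providers and a sensor network (hypothetical weights).
contributions = {f"data-{i}": 2 for i in range(1, 7)}
contributions.update({f"compute-{i}": 5 for i in range(1, 4)})
contributions["sensor-net"] = 3
payout = split_payment(1_000_000, contributions)
print(sum(payout.values()))  # 1000000
```

Working in integers rather than floats matters for settlement: the shares must sum exactly to the amount paid, or the ledger will not balance.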

This is why blockchain adoption has been expanding outward from finance, not narrowing. DeFi established that programmable money works. Stablecoin transaction volume is accelerating and is forecast to reach $1 trillion per month by December 2026. DeSci is applying programmable value to scientific funding and coordination. Decentralised Physical Infrastructure Networks (DePIN) use token incentives to build and operate physical infrastructure. Decentralised AI (DeAI) is creating a decentralised AI compute market to maximise energy efficiency. The emerging agentic economy, in which AI agents transact, stake, and coordinate onchain, represents the next and most consequential expansion. These are not separate use cases; they are expressions of the same underlying thesis: the first time software can make and keep financial promises is also the first time autonomous machines can participate in an economy.

Without programmable value networks, the flywheel splutters. Compute is being manufactured and assembled, but cannot be dynamically allocated and priced by agents. Models reason but cannot transact on their conclusions. Data and intellectual property flow but cannot be licensed, valued, or compensated at the micro-level. Physical systems operate but cannot coordinate without human gatekeepers at every junction. Programmable value networks are not one option among many for the machine economy’s financial layer; they are uniquely suited to it and capable of adapting as it evolves. Tokenisation is effective not merely for existing financial assets but also for intellectual property and for rewarding contributions of resources and activity. As such, tokens are the coordinating and resource-allocating force without which the engine does not run. Adam Smith’s invisible hand, in digital form, is now cost-effective to deploy in marketplaces for everything, everywhere. This is an improvement on capitalism, not through less of it, but through more of it, more evenly spread.

The pattern is consistent: wherever a human intermediary currently coordinates information, negotiates terms, and settles an outcome, an agent-native alternative becomes viable. Legal discovery, agricultural yield optimisation, education assembled in real time around a student's demonstrated gaps, energy grids balancing across millions of distributed sources. Each follows identical logic. Each is already well underway. The flywheel does not respect sector boundaries. What varies is the speed of arrival, determined by data density, model maturity, and the regulatory friction that must be navigated. The question is not which domains transform, but in what order, and what governs the sequence. That is what the roadmap addresses.

The Road Map

The Machine Economy will not arrive all at once. Even when the technological capabilities exist, permeating the existing system takes time and meets friction. This evolution assembles itself across three distinct phases, each unlocking the next, each constrained by a small number of specific bottlenecks that research and capital are already targeting: The Proving Ground, The Infrastructure Build-Out, and The Launch.

Stage One : The Proving Ground (the coming years)

The immediate period is not about invention. The inventions are largely in place. It is about agents learning to work, and infrastructure learning to trust them. Right now, three constraints bind:

First, long-term memory: today's agents are amnesiac; they reset between sessions, incapable of building the longitudinal context that genuine economic agency requires. Persistent, structured memory architectures (e.g., Mem0[7]) demonstrate a 26% improvement in long-horizon reasoning accuracy, but production-grade deployment remains nascent. An agent that forgets cannot build a reputation, a credit history, or a relationship, and these are the preconditions for autonomous economic participation.
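The link between memory and reputation can be made concrete. Below is a minimal Python sketch, assuming a local JSON file stands in for a persistent memory layer; the `AgentMemory` class, its methods and the success-rate reputation score are hypothetical illustrations, not Mem0's API or any production architecture. The only point is that history which survives a session reset is what a reputation can be computed from.

```python
import json
import tempfile
from dataclasses import asdict, dataclass, field
from pathlib import Path


@dataclass
class AgentMemory:
    """Hypothetical persistent memory: interaction history survives session resets."""

    agent_id: str
    outcomes: list[dict] = field(default_factory=list)

    def record(self, counterparty: str, succeeded: bool) -> None:
        # Append one structured record of an economic interaction.
        self.outcomes.append({"counterparty": counterparty, "succeeded": succeeded})

    def reputation(self) -> float:
        # Naive reputation: share of successful interactions (0.0 with no history).
        if not self.outcomes:
            return 0.0
        return sum(o["succeeded"] for o in self.outcomes) / len(self.outcomes)

    def save(self, path: Path) -> None:
        # Persist the full history so the next session can reload it.
        path.write_text(json.dumps(asdict(self)))

    @classmethod
    def load(cls, path: Path, agent_id: str) -> "AgentMemory":
        # Without a persisted file the agent starts amnesiac, with an empty history.
        if not path.exists():
            return cls(agent_id)
        return cls(**json.loads(path.read_text()))


if __name__ == "__main__":
    store = Path(tempfile.mkdtemp()) / "agent-42.json"
    first_session = AgentMemory.load(store, "agent-42")
    first_session.record("supplier-a", True)
    first_session.record("supplier-b", False)
    first_session.save(store)

    # A new session reloads the same history instead of starting from zero.
    second_session = AgentMemory.load(store, "agent-42")
    print(second_session.reputation())  # 0.5
```

A real system would need the privacy, verification and cross-counterparty properties discussed later; a single success rate is only the simplest possible reputation signal.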

Second, settlement infrastructure: stablecoins and onchain payment rails are scaling fast, but agent-native wallet standards, programmable credit, and identity primitives (EIP-4337, with 26 million smart wallets and counting; W3C Verifiable Credentials 2.0; ERC-8004 and its successors) need regulatory clarity and adoption before autonomous multi-party transactions can operate at scale without human co-signatories. This is also the point at which any comparison with high-frequency trading (HFT) breaks down: HFT automated trading inside closed venues; the machine economy requires open settlement across unbounded counterparties.
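Whether an agent may settle without a human co-signatory ultimately comes down to policy. A minimal Python sketch, assuming a hypothetical off-chain `SpendingPolicy` with per-transaction and daily caps and a counterparty allowlist: the agent settles alone inside those bounds and escalates everything else to a human. Smart-contract wallets can enforce comparable rules on-chain; this sketch does not model EIP-4337, Verifiable Credentials or ERC-8004.

```python
from dataclasses import dataclass


@dataclass
class SpendingPolicy:
    """Hypothetical agent-wallet policy; amounts are integer stablecoin cents."""

    per_tx_limit: int
    daily_limit: int
    allowed_counterparties: set[str]
    spent_today: int = 0

    def authorise(self, counterparty: str, amount: int) -> str:
        # Non-positive amounts and unknown counterparties are rejected outright.
        if amount <= 0 or counterparty not in self.allowed_counterparties:
            return "reject"
        # Within both limits, the agent settles on its own authority.
        within_tx = amount <= self.per_tx_limit
        within_day = self.spent_today + amount <= self.daily_limit
        if within_tx and within_day:
            self.spent_today += amount
            return "auto"
        # Anything larger escalates to a human co-signatory.
        return "needs_cosigner"

    def reset_day(self) -> None:
        # Called once per day (scheduling is out of scope for this sketch).
        self.spent_today = 0


if __name__ == "__main__":
    policy = SpendingPolicy(
        per_tx_limit=5_000,
        daily_limit=12_000,
        allowed_counterparties={"gpu-market", "data-vendor"},
    )
    print(policy.authorise("gpu-market", 4_000))   # auto
    print(policy.authorise("data-vendor", 6_000))  # needs_cosigner (over per-tx limit)
    print(policy.authorise("gpu-market", 5_000))   # auto (9,000 spent today)
    print(policy.authorise("gpu-market", 4_000))   # needs_cosigner (over daily limit)
    print(policy.authorise("unknown", 100))        # reject
```

Widening these bounds as an agent accumulates a track record is one plausible path from co-signed pilots to autonomous settlement.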

Third, physical clumsiness: humanoid and robotic systems remain limited to semi-structured environments. Battery life sits at 2–4 hours, well short of an operational shift. Dexterous manipulation of novel objects remains unreliable.

It is worth registering the most credible sceptical voice on this last constraint. Rodney Brooks (co-founder of iRobot, former director of the MIT AI Lab) argues that CEO enthusiasm is overstating humanoid adoption timelines by orders of magnitude, and that dexterous manipulation remains technically harder than the demonstration videos suggest. He notes that no technology in history has ever scaled at the rate humanoid-robot company executives publicly project, and that the gap between controlled-environment demos and reliable real-world deployment is routinely underestimated. This is not a counsel of despair but a useful calibration: the physical layer of the machine economy will arrive on an engineering timeline, not a fundraising one. The roadmap here attempts to account for that friction.

By the end of Stage One, expect: the first commercially autonomous agent-to-agent economic transactions settled without human approval; persistent agent memory as table-stakes infrastructure; and humanoids deployed at scale in structured logistics and light assembly. Expect, too, the product:moment fit paradigm to begin replacing the product:market fit paradigm in digitally native verticals such as finance, data licensing and software development.

Stage Two : The Infrastructure Build-Out (the next decade)

This is the phase in which the flywheel begins to visibly compound. Three breakthroughs converge to transform pilots into platforms.

Memory becomes pervasive. Agents develop persistent, cross-session, cross-counterparty memory with privacy preserved. This is the machine equivalent of professional experience. An agent that has executed ten thousand logistics negotiations carries that context into the ten-thousand-and-first. This is not a metaphor for better prompting; it is the technical precondition for trust, specialisation, and compounding capability. The landscape shifts from "what can an agent do in one session" to "what can an agent become over time."

Reasoning scales with context. Long-horizon planning, currently limited to tasks measurable in minutes or hours, will extend to projects spanning weeks and months. World models, recursive architectures and persistent chain-of-thought begin to give agents the capacity for genuine strategic reasoning rather than sophisticated task completion. The distinction matters: a task-completing agent is a tool; a strategically reasoning agent is an economic participant.

Token-inspired, capability-driven pricing emerges as the market mechanism. Rather than flat SaaS subscriptions or hour-based billing, intelligence begins to price itself on demonstrated outcomes: a negotiation agent charges a percentage of the value created; a drug discovery agent's compute is funded by onchain IP tokens released upon a trial milestone. Research already shows that the relationship between model intelligence and cost is log-linear, i.e. incremental improvements in intelligence command nonlinearly higher prices[8]. As benchmarks proliferate and agent track records accumulate on-chain, this dynamic pricing becomes the dominant commercial model. Adam Smith's invisible hand, finally encoded.
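Both pricing examples above reduce to simple settlement rules. The following is a minimal Python sketch, assuming amounts in integer minor units and fees in basis points; `success_fee`, `MilestoneEscrow`, `attest` and the milestone names are hypothetical. An onchain version would also need a trusted oracle to attest that a trial milestone was actually met, which this sketch simply takes as given.

```python
from dataclasses import dataclass


def success_fee(baseline_cost: int, negotiated_cost: int, fee_bps: int) -> int:
    """Charge a share (in basis points) of the value created: the saving vs a baseline."""
    value_created = max(0, baseline_cost - negotiated_cost)
    return value_created * fee_bps // 10_000


@dataclass
class MilestoneEscrow:
    """Hypothetical escrow that releases pre-funded tranches as milestones are attested."""

    tranches: dict[str, int]  # milestone name -> amount released on attestation
    released: int = 0

    def __post_init__(self) -> None:
        # Copy so that releasing tranches never mutates the caller's dict.
        self.tranches = dict(self.tranches)

    def attest(self, milestone: str) -> int:
        # Each tranche pays out at most once; unknown milestones release nothing.
        amount = self.tranches.pop(milestone, 0)
        self.released += amount
        return amount


if __name__ == "__main__":
    # A negotiation agent saves 80,000 against its baseline and takes 10% of that saving.
    print(success_fee(1_000_000, 920_000, fee_bps=1_000))  # 8000

    escrow = MilestoneEscrow({"phase_1_readout": 2_000_000, "phase_2_readout": 5_000_000})
    print(escrow.attest("phase_1_readout"))  # 2000000
    print(escrow.attest("phase_1_readout"))  # 0 (already released)
```

Integer arithmetic is a deliberate choice here: settlement logic that rounds floating-point values can leak or invent value at the margin.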

On the physical side: battery technology should reach six-hour operational capacity by 2030, though a full eight-hour shift may remain elusive[9]. The humanoid robot market is forecast to reach $6 billion by 2030, with shipments of approximately 136,000 units[10]. That is sufficient for meaningful deployment in manufacturing, logistics and elder care, but nowhere near mass saturation. Robot prices are targeted to fall from above $75,000 today to the $20,000–$30,000 range by 2030[11], driven by Chinese manufacturing scale and component standardisation.

The critical unlock of Stage Two is not any single technology. It is the moment when onchain reputation, persistent memory, and outcome-based pricing combine to allow an agent to earn its own capital, deploy it, and compound it. With humans fully taken out of the loop, this becomes the ignition point for the age of deployed digital agency.

Stage Three : The Launch (the coming decades)

By 2030, the component parts exist. What Stage Three delivers is their full systemic expression: the self-improving flywheel operating at civilisational scale.

Artificial dexterity reaches human parity for most manipulation tasks. The global humanoid robot market is projected to exceed $51 billion by 2035, with over 2 million units shipped annually[12] and average sale prices declining toward $25,000. The atoms-and-cells layer of the machine economy (physical robots synthesising novel materials, running autonomous laboratories, operating in domestic and medical environments) will cease to be a prototype domain and become infrastructure. The broader robotics market is projected to reach $150 billion by 2030, doubling from its current scale[13], with the market for general-purpose systems extending well beyond that by 2035.

AI-designed matter enters the physical world. AlphaFold 3's molecular modelling (building on the Nobel Prize-winning AlphaFold 2), GNoME's 2.2 million predicted crystal structures, and MatterGen's novel compound design are beginning to produce outputs that are ready for commercial and clinical deployment. The first AlphaFold-designed drug is expected to reach Phase II trials within this window. An AI-designed battery chemistry (dissolving the primary energy constraint on mobile robotics) would be the most consequential single unlock of the decade, and autonomous laboratory systems are actively targeting it now. All of this depends on long-horizon reasoning continuing to progress through benchmarks at its current rate.

The transaction economy inverts. By 2035, machine-initiated transactions (agents purchasing compute, licensing data, settling micro-payments, and staking capital) are projected to dwarf human-initiated ones in volume and, plausibly, in value. The human economy, as argued earlier, becomes a fraction of a per cent of total economic activity by transaction count. Financial infrastructure built for human latency (overnight settlement, KYC for individuals, corporate treasury functions) is not upgraded. It is replaced. However, government latency, both on legal clarity over personhood for agents and on regulatory clarity for agent-native wallets and the like, may yet prove a ‘spanner in the works’.

The question this roadmap leaves open is alignment and governance. The technical trajectory is reasonably discernible. What remains contested is who directs the flywheel, on whose terms, and toward which ends. That is not an engineering question. It is the central political and philosophical question of the coming decade. The machine economy will assemble itself on the timeline above with or without a considered answer. The cost of leaving it unanswered is, however, asymmetric.

Conclusion

We have arrived at our destination. Data informs. Silicon thinks. Models reason. Money moves. Atoms enact. The machine economy is not a platform to launch or a protocol to adopt. It is a self-improving system that compounds with every inference run, agent transaction settled and autonomous lab result logged onchain.

The flywheel compounds. The question is how well we direct it to solve the problems that count, and in a manner that serves humanity.

The critical lesson of the past decade of crypto and blockchain is that finance was always the foundation, never the destination. Every use case that has worked at scale has used financial primitives as its coordination layer. That is not a limitation; it is the mechanism by which this new economic engine bootstraps itself. Programmable value networks are the coordination and resource-allocation layer without which the machine economy’s flywheel cannot turn. The assets within them must be as adaptive, composable, and granular as the ephemeral applications they enable. Nothing else can keep pace.

Fabric Ventures's conviction is that durable value will not accrue to any single model or application: both are commoditising faster than most investors have registered. It will accrue to the coordination infrastructure beneath them: programmable capital markets, machine infrastructure networks, and agent trust systems that let agents transact, be trusted, and compound. We have backed founders across multiple waves of picks and shovels. This gold rush will dwarf anything before it.

Which brings us back to the ‘silicon crane’.

The deus ex machina has traced a long and distinct arc through the literary imagination: from unquestioned divine rescue, through moral dilemma, to rescue as illusion, and finally rescue as weapon. The statistics are not comforting: when the crane drops the deity into Act III, it ends well for the hero only three times in four.

Dickens understood both outcomes of divine intervention with characteristic warmth and menace. When the long-lost relative descends in Oliver Twist or Great Expectations, be it Brownlow rescuing Oliver from the magistrate's bench or Miss Havisham's fortune reshaping Pip's existence, the intervention is simultaneously salvation and distortion. The beneficiary is elevated, but not without cost to their autonomy and their understanding of cause and effect. Dickens' machines are benevolent but not neutral. They reshape the recipient even as they rescue them.

Kafka's vision is darker and more contemporary. In The Trial, the agents of execution are not cruel. They are simply executing a process: diligent, polite, unstoppable, and entirely opaque as to their instructions. Josef K. never learns the charge. This is the machine economy's most plausible failure mode. It is already visible in the algorithmic systems determining credit scores, content visibility, and hiring outcomes today. Not an evil villain. Simply agents optimising for objectives never fully specified, accountable to no one.

The difference between the Dickensian outcome and the Kafkaesque one is not technical. It is architectural. Will the agents operating in this economy carry verifiable identity, transparent objectives, and genuine accountability? Or will they operate, as Kafka's court operated, from behind a door that recedes the faster you walk toward it?

We are building and funding that architecture now. The narrow window in which we can define the starting conditions for this divine emergence will not remain open for long. The question is not whether the god descends. It is whether, when it does, it serves the people in the story or merely resolves the plot.

Three converging inventions. One self-improving system. A brief window to get it right. The Machine Economy is already upon us.

—-----

With thanks to Ian Emerson, Lata Persson, Michael Jackson, Mike Hearn, Christina Frankopan, John Fiorelli, Charlie Muirhead, Camilla McFarland, Danielle Walsh, Eleanor Hudson and Thomas Crow.

—-----

References

[1] Mike Hearn's January 2012 definition of an 'agent' for the Bitcoin community. https://en.bitcoin.it/wiki/Agent

[2] Pendo Analysis. https://www.pendo.io/resources/the-2019-feature-adoption-report/

[3] Gartner. https://www.silicon.eu/saas-licences-30-of-them-go-unused-8529.html

[4] CIO Magazine https://www.cio.com/article/270487/enterprise-software-the-roi-of-application-integration.html, Adalo https://www.adalo.com/posts/legacy-api-integration-statistics-app-builders and AppVerticals https://www.appverticals.com/blog/software-development-cost-estimation/

[5] CloudQA. https://cloudqa.io/how-much-do-software-bugs-cost-2025-report/

[6] Bank for International Settlements. https://www.bis.org/publ/arpdf/ar2025e3.htm

[7] Mem0 website. https://mem0.ai/

[8] Andrey Fradkin. https://andreyfradkin.com/assets/LLM_Demand_12_12_2025.pdf

[9] Bain & Company. https://www.bain.com/insights/humanoid-robots-from-demos-to-deployment-technology-report-2025/

[10] Edge AI and Vision Alliance. https://www.edge-ai-vision.com/2025/11/humanoid-robots-2025-the-race-to-useful-intelligence/

[11] Robozaps. https://blog.robozaps.com/b/future-of-humanoid-robots

[12] Edge AI and Vision Alliance. https://www.edge-ai-vision.com/2025/11/humanoid-robots-2025-the-race-to-useful-intelligence/

[13] StartUs Insights. https://www.startus-insights.com/innovators-guide/future-of-robotics-full-guide/
